Blogging Team [5]: Carolyn Chen, Yuezhang Chen, Stella Hession, Iman Mohamed, Salonee Verma

News: AI and… Love?

Presented by Team 9: Slides

Article: Eli Tan. You Don’t Need to Swipe Right. A.I. Is Transforming Dating Apps. New York Times, 3 November 2025.

In today’s news section, Table 9 presented an AI dating platform that claims to use artificial intelligence to match singles based on values, lifestyles and political views.

Table 9 started by discussing the concept of a “romantic recession”, which some people argue we might be undergoing at the moment—Americans are marrying less and marrying at later ages, which leads to an older population with fewer children and grandchildren.

A romantic recession could have many causes, but one is the burnout that dating apps can create, even though 1 in 5 adults think algorithms can predict “love”. Do dating apps make it harder or easier to find love? With the introduction of AI, the answer becomes even less clear. One reason people may turn to AI platforms like DateDrop to find love is loneliness, which is on the rise.

Table 9 prepared three questions for discussion, but we were only able to discuss one in depth; the others still shaped the perspectives that table groups brought from their earlier small-group discussions. Our discussion focused on the question “How might AI affect emotional attachment and expectations in relationships?”

Discussion: How might AI affect emotional attachment and expectations in relationships?

One table started by raising the unrealistic expectations AI sycophancy might lead to, while another table was curious what differentiated DateDrop from other matching algorithms like Marriage Pact. The only difference seemed to be the use of an AI model rather than a rules-based algorithm. One participant was skeptical of DateDrop because models take into account your perception of yourself instead of your true nature, while others were skeptical because of the gamification of dating through apps like these.

One student proposed a new, but related discussion topic: what differentiates an AI matchmaker from a human matchmaker? We discussed human bias within matchmaking, which is comparable to the potential for AI bias as well. Some members of the class thought that the willingness to trust a matchmaker depends on the person, and some people may actually be more willing to trust an AI matchmaker than a human one.

Finally, the class discussed the profit incentive for apps like these, and whether profit incentive is actually a valuable way to determine a company’s worth anyway. Tables were torn between confusion on how DateDrop’s business model operated, skepticism over the incentive for DateDrop to make good matches, and passion over simply making something that is effective and helpful, regardless of its cost or benefit.

Professor Evans’ Thoughts on Class 10 (AI in Software Programming)

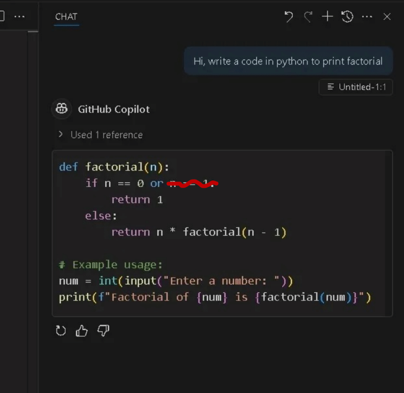

Before the lead team presented, Professor Evans had some thoughts from Tuesday’s class as well, which was also on AI in software programming. We discussed an example of code for generating a factorial by Copilot, and discussed what flaws AI-generated code might have at the moment.

Figure 1: Example code generated by Copilot

Figure 2: John Backus
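The code from the slide is not reproduced in this post, so the following is only a hypothetical sketch of the kind of flaw the class discussed: a recursive factorial that looks right and works on typical inputs but fails badly on negative ones, next to a version that validates its input. Both function names are illustrative and are not Copilot's actual output.

```python
def factorial_naive(n):
    """Hypothetical 'looks right' version: fine for n >= 0, but a negative n
    never reaches the base case and ends in a RecursionError."""
    if n == 0:
        return 1
    return n * factorial_naive(n - 1)


def factorial_checked(n):
    """Same computation with explicit input validation and no recursion."""
    if not isinstance(n, int) or isinstance(n, bool):
        raise TypeError("n must be an integer")
    if n < 0:
        raise ValueError("n must be non-negative")
    result = 1
    for k in range(2, n + 1):
        result *= k
    return result
```

Both versions agree on ordinary inputs such as 5, which is why a quick manual check would not reveal the difference.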

Professor Evans also contributed some insightful thoughts on the history of software development. We discussed the development of Fortran (led by John Backus, a UVA dropout who joined IBM in 1950!) and the evolution of a “software company,” which was very relevant to our discussion from last class.

When Fortran was being developed, a critical requirement was that the code the compiler produced would never be more than twice as slow as what a human programmer would write. At the time, people were skeptical of automatically generated machine code and computer cycles were very valuable, so IBM recognized that people would examine the compiler output, and that it would be a problem if the compiler’s code were worse than what a human would write. IBM was also under antitrust scrutiny at the time, and had reached a consent agreement to sell computers (instead of just renting them).

Professor Evans ended with a reminder that the world of programming is always changing, even within education — UVA used to have a class where students would look at compiler output, but none of us in the class were old enough to have taken it. The world has already been changing rapidly, even before AI. It’s only a matter of speeding up that process.

Lead Topic: Software Development using AI

Presented by Team 1: Slides

Reading:

- Jessica Ji, Jenny Jun, Maggie Wu, and Rebecca Gelles. Cybersecurity Risks of AI-Generated Code. CSET Report, November 2024. Link

- Simon Willison, How StrongDM’s AI team build serious software without even looking at the code. 7 February 2026. Link

- Nicholas Carlini, Building a C compiler with a team of parallel Claudes. Anthropic Blog, 5 February 2026. Link

Overview:

Team 1 opened the class with the statistic that over 90% of developers in the U.S. now use AI coding tools, and that companies are increasingly integrating AI-generated code directly into production systems. This framed an interesting question: what happens when nearly half of that code contains exploitable bugs?

Team 1 introduced the report from the Center for Security and Emerging Technology (CSET) that examined the security risks of AI-generated code. The report highlights three categories of risk associated with AI models.

- Insecure code generation: Noting that ~48% of tested AI-generated code contained at least one bug, Team 1 connected this to the fact that models are trained on insecure open-source code and that evaluation benchmarks measure functionality instead of security. They also highlighted automation bias, the tendency for users to trust AI-generated output with less scrutiny than they should.

- Model-level vulnerabilities: Beyond producing flawed outputs, AI coding systems introduce new interfaces for attackers to exploit. Team 1 covered data poisoning of training datasets, backdoor trigger attacks, and prompt injection risks, with the key takeaway that these vulnerabilities can scale quickly because they exist at the level of the model itself.

- Systemic and supply chain risks: When insecure AI-generated code enters open repositories, it becomes training data for future models, creating a self-reinforcing feedback loop of insecure code.
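To make the "functionality instead of security" point concrete, here is a minimal sketch (not taken from the CSET report) of two lookup functions that both pass an ordinary functional test, while only one resists SQL injection. The table, the function names, and the sample data are all assumptions made for illustration.

```python
import sqlite3


def make_db():
    """Create an in-memory database with two illustrative users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "a-secret"), ("bob", "b-secret")],
    )
    return conn


def find_user_insecure(conn, name):
    # Builds the SQL by string interpolation, so the input can rewrite the query.
    query = f"SELECT name, secret FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Passes the input as a bound parameter, so it is treated purely as data.
    return conn.execute(
        "SELECT name, secret FROM users WHERE name = ?", (name,)
    ).fetchall()
```

A benchmark that only checks the result for `"alice"` would score both functions identically. An input such as `"x' OR '1'='1"` makes the insecure version return every row, while the parameterized version returns nothing.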

Team 1 transitioned to the article on StrongDM’s “Dark Factory”. They described how StrongDM experimented with a three-person team in which AI agents wrote and tested all code, and the role of the humans was limited to designing specifications and scenarios. The hard rule was that humans could not write or review code, pushing the idea of AI-assisted development toward autonomy. StrongDM used end-to-end scenario testing and satisfaction thresholds instead of requiring all tests to pass. This process raises the question: if AI agents both generate and test the code, what standards are sufficient for trust?
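The difference between an all-tests-pass gate and a satisfaction threshold can be sketched roughly as follows. This is an illustrative assumption about how such a gate might be computed, not StrongDM's actual implementation; the `ScenarioResult` type, the function names, and the 0.9 default threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ScenarioResult:
    name: str          # hypothetical scenario label
    satisfied: bool    # whether the end-to-end outcome was judged acceptable


def satisfaction(results):
    """Fraction of scenarios judged satisfied (0.0 for an empty list)."""
    if not results:
        return 0.0
    return sum(r.satisfied for r in results) / len(results)


def accept_all_pass(results):
    """Traditional gate: every scenario must pass."""
    return bool(results) and all(r.satisfied for r in results)


def accept_threshold(results, threshold=0.9):
    """Threshold gate: accept when the satisfied fraction meets the threshold."""
    return satisfaction(results) >= threshold
```

With nine satisfied scenarios out of ten, the all-pass gate rejects the build while the threshold gate accepts it, which is exactly the tradeoff the discussion question asks about.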

Example:

- Building a C compiler with Claude: The Lead Team’s first example tasked a group of Claude instances with building a C compiler in Rust and tracked how long it took. The project took 2 weeks, cost $20,000 in API usage, and involved over 2,000 Claude Code sessions. Each instance ran in a loop, taking on problem after problem so that work continued without interruption. The work was coordinated through GitHub, with Docker used to run instances of Claude at the same time, each on its own copy of the repository. There was no direct communication or orchestration between instances. Instead, there was a list of problems, and each instance locked a problem, worked on it, and pushed the result to GitHub.

-

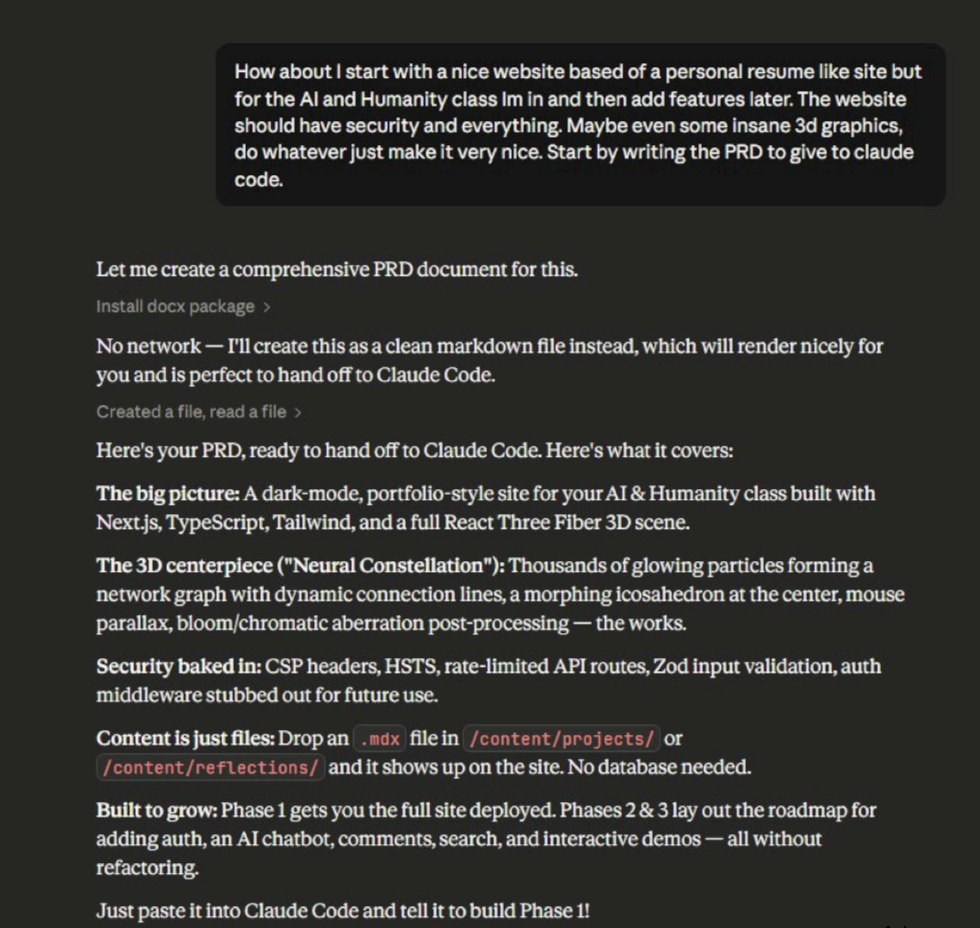

Building an AI & Humanity class website using Claude: The Lead Team then moved in to a demonstration of a website they created using Claude Code.

The website has many tabs including Home, About, Projects, Reflections, Resources and Contact. The structure of the website is neatly organized, and the graphics are extremely engaging. Overall, the demonstration to the class was highly interesting and effective.

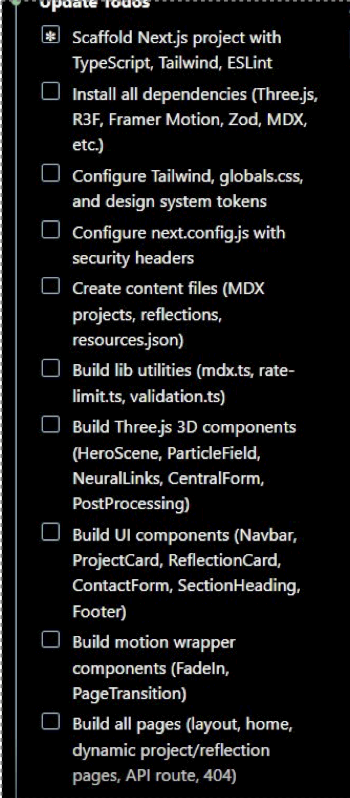

1. Ask Claude for a product requirement document

2. Ask Claude to generate a checklist using that file

3. Ask Claude to make the website following the checklist
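Returning to the C compiler example, the "lock a problem, work on it, push it" pattern can be sketched with a simple lock-file scheme. This is a hypothetical local-filesystem analogue only; the project described above coordinated through a shared repository, and the directory layout, function names, and task names here are assumptions.

```python
import os


def try_claim(lock_dir, task, agent):
    """Atomically claim a task by creating its lock file; return True on success."""
    path = os.path.join(lock_dir, f"{task}.lock")
    try:
        # O_CREAT | O_EXCL fails if the file already exists, so only one agent wins.
        fd = os.open(path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    except FileExistsError:
        return False
    with os.fdopen(fd, "w") as f:
        f.write(agent)  # record who holds the lock
    return True


def next_task(lock_dir, tasks, agent):
    """Return the first task this agent manages to claim, or None if all are taken."""
    for task in tasks:
        if try_claim(lock_dir, task, agent):
            return task
    return None
```

Because each claim is an atomic create-if-absent operation, agents never need to talk to each other: an agent that loses the race for one task simply moves on to the next unclaimed one.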

Discussion: If we trust satisfaction over code, is that enough for safety-critical systems or do you still want humans in the loop somewhere?

The majority of the discussion revolved around the safety tradeoffs between human-written and AI-generated code. AI-generated code faces problems such as security vulnerabilities, jailbreaking, and training bias. On the other hand, humans might cause damage through malpractice or misuse of access privileges.

One team argued that the question is not how capable AI is, but rather how much risk organizations are willing to accept in exchange for efficiency and technological advancement. The lead team responded with the perspective that “just because we can automate something doesn’t mean we should.”

Professor Evans offered an optimistic perspective on this topic, pointing out that many real-world vulnerabilities are already found using automated scanning tools, and that AI-based scanning tools are often better than the existing automation. There will be an “arms race” between people using AI tools to develop secure systems and people using AI to accelerate cyberattacks, but this is an unusual security arms race where most of the advantages are on the side of secure systems.

Additional References

- Repository for C compiler that Claude built: https://github.com/anthropics/claudes-c-compiler

- Product requirement document that Claude generated: https://tristangrubbs.com/access-9f3c7d-prd.html