Blogging Team 11: Rimon Ghebremeskel, Tapi Goredema, Yi Ping, Elijah Smith, Samrawit Yemane

From FARS to Vibe Research

Sources:

- Analemma Intelligence, Introducing FARS. 2026.

- European Central Station (36Kr). 228 hours of non-stop work to produce 100 papers, burning through 11.4 billion Tokens: FARS has gone crazy. 25 February 2026.

At the beginning of 2026, a Chinese company called Analemma finished its first experiment on the Fully Automated Research System, FARS.

FARS is an AI system that can run the whole research loop by itself, including reading papers, finding ideas, writing code, running experiments, and writing papers. FARS ran about 417 hours, costing $186,000, and wrote 166 papers.

According to Stanford’s Agentic Reviewer, the papers received an average score of 5.05. This falls between the public average submission score for ICLR 2026 (4.21) and the average accepted score for ICLR 2026 (5.39). In other words, even if the system did not produce top-tier conference papers on average, it was not just generating nonsense either. Although this is an interesting finding, it raises questions about the validity of the scores: the reviewer is itself an AI system, so the evaluation may not reflect how human experts would judge the work.

The large number of papers and the relatively low cost per paper (around $1,120) are only part of why FARS attracted so much attention. The more important reason is that FARS makes a new way of doing research, vibe research, feel possible.
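The per-paper figure follows directly from the totals reported above. A quick check in Python, using only those reported numbers (the variable names are ours, and the hours figure is an average over the run, not the time spent on any single paper):

```python
# Totals as reported for the first FARS run.
total_cost_usd = 186_000
total_hours = 417      # "about 417 hours", so treat results as approximate
papers = 166

cost_per_paper = total_cost_usd / papers    # average dollars per paper
hours_per_paper = total_hours / papers      # average run time per paper

print(f"Cost per paper: ${cost_per_paper:,.0f}")
print(f"Hours per paper: {hours_per_paper:.1f}")
```

This gives roughly $1,120 and about 2.5 hours of run time per paper.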

Vibe research differs from traditional research, where humans carry out each step themselves over several months. It also differs from ordinary AI-assisted research, where AI mainly helps with isolated tasks such as searching for related papers, polishing writing, or programming. In vibe research, AI takes over the whole research workflow, and humans only set directions, supervise the process, and (optionally, as it turns out) check the final results.

Vibe research currently is mainly focused on AI, especially in NLP and LLM research. The first reason is that the whole workflow of NLP/LLM research can be done inside the computer. The systems do not need access to the physical world. They only need to read papers, find ideas, do experiments, and write papers. These tasks can be quickly finished by AI systems. Secondly, the NLP/LLM fields have a great amount of data, including open-sourced papers, repositories, datasets, and relatively clear benchmarks. That means the systems have enough data to be trained and optimized. Thirdly, companies have incentives in these fields. If a company can use AI to improve AI faster, the payoff directly feeds back into the model itself.

Case Studies of Vibe Research

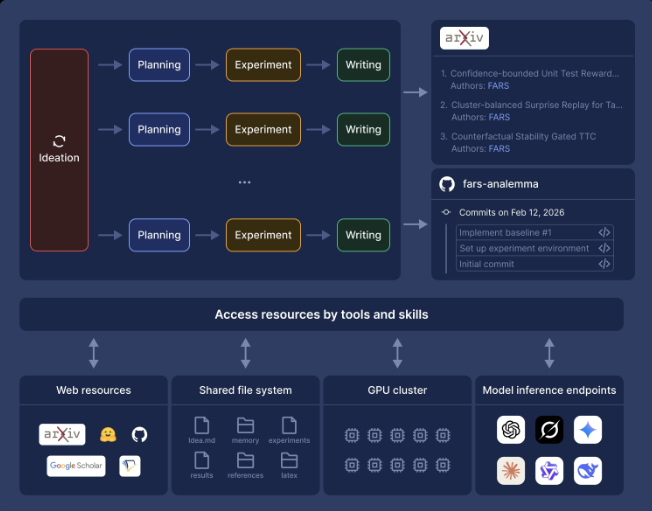

FARS is a fully integrated system built on four specialized agents that together handle the entire research process: Ideation, Planning, Experimentation, and Writing. This covers coming up with hypotheses, planning actionable steps, constructing experiments to collect results, and turning those results into a complete paper. All of this, crucially, is done without an iota of human intervention. However, FARS is not the only one of its kind. Other AI research systems push the process further.

Figure 1: FARS organizes research into an end-to-end pipeline that moves from ideation to planning, experimentation, and writing while drawing on external tools, compute, and online resources to produce papers and code.

Source: Analemma: “Introducing FARS”
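To make the four-stage structure concrete, here is a minimal sketch of a sequential pipeline in Python. It is not Analemma's implementation: the `ResearchState` class, the stage functions, and `run_pipeline` are all hypothetical names, and each stage only records a placeholder string where a real system would call an LLM-backed agent with tools and compute.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ResearchState:
    """Accumulates artifacts as a project moves through the pipeline."""
    topic: str
    artifacts: dict[str, str] = field(default_factory=dict)


# Hypothetical stand-ins for the four agents; each adds one artifact.
def ideation(state: ResearchState) -> ResearchState:
    state.artifacts["hypothesis"] = f"hypothesis about {state.topic}"
    return state


def planning(state: ResearchState) -> ResearchState:
    state.artifacts["plan"] = f"plan to test: {state.artifacts['hypothesis']}"
    return state


def experimentation(state: ResearchState) -> ResearchState:
    state.artifacts["results"] = f"results from: {state.artifacts['plan']}"
    return state


def writing(state: ResearchState) -> ResearchState:
    state.artifacts["paper"] = f"paper reporting: {state.artifacts['results']}"
    return state


# The order mirrors Figure 1: ideation, planning, experimentation, writing.
STAGES: list[Callable[[ResearchState], ResearchState]] = [
    ideation,
    planning,
    experimentation,
    writing,
]


def run_pipeline(topic: str) -> ResearchState:
    """Pass one research topic through every stage in order."""
    state = ResearchState(topic)
    for stage in STAGES:
        state = stage(state)
    return state


result = run_pipeline("tokenizer robustness")
print(list(result.artifacts))  # ['hypothesis', 'plan', 'results', 'paper']
```

The point of the sketch is that each stage consumes what the previous one produced, which is why no human hand-off is needed between steps.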

Google’s AI co-scientist works in a notably different way. Rather than replacing researchers, it is designed to assist them in generating hypotheses and research proposals, acting as a “virtual scientific collaborator,” in Google’s own words. Like FARS, it uses a multi-agent network, one that iteratively creates scientific proposals and ranks them based on automated feedback in order to refine them. According to Google, expert evaluators preferred the ideas generated by the AI co-scientist over those of other comparable models. Furthermore, when it was asked to help predict drug repurposing opportunities, its findings were validated through experimentation and clinician feedback.

Sakana’s The AI Scientist is another automated research system and an important precursor to FARS. It follows a similar process: generating ideas, iterating through experiments, conducting a literature review, and finally writing complete reports. Notably, Sakana announced that a later version had produced three papers that were submitted to an ICLR 2025 workshop for double-blind peer review. The submissions were made in cooperation with the workshop’s organizers to see how fully AI-generated papers would fare under anonymous peer review. According to Sakana, one of the three, titled “Compositional Regularization: Unexpected Obstacles in Enhancing Neural Network Generalization,” passed peer review with a score of 6.33, higher than many of the human-written papers. The team later gave an ICLR presentation detailing the entire process. It is important to note that the workshop’s review process is less rigorous than the review, including meta-review, of the main ICLR conference, and Sakana has admitted that this paper would likely not have passed it. Additionally, although Sakana states that the paper was accepted, it had been agreed with the ICLR organizers that the paper would be withdrawn before it could be published.

“[I]t was determined ahead of time… we would withdraw [the papers] before they were actually published. This is because they were AI-generated, and the AI and scientific communities have not yet decided whether we want to publish AI-generated manuscripts in the same venues.”

-Sakana AI, “The AI Scientist Generates its First Peer-Reviewed Scientific Publication”

Nonetheless, as AI-driven research becomes more refined, autonomous systems such as The AI Scientist may, in the near future, produce research that is indistinguishable from human-written work.

Finally, Biomni is an example of bringing general AI-driven research into domain-specific scientific practice, in this case biomedicine. Using a unified environment containing 150 specialized tools, 105 software packages, and 59 databases, Biomni was reported to outperform baselines across diverse biomedical tasks, and even to match or exceed human-level performance. This suggests that AI systems are shifting from applications that only theorize to ones that also produce actionable, meaningful scientific outputs.

Taken together, these systems show that AI-driven research, although it varies across fields and applications, is moving in the same direction. FARS emphasizes autonomous scale and throughput, Google’s AI co-scientist homes in on collaborative hypothesis generation and validation, Sakana’s The AI Scientist focuses on automating paper production, and Biomni targets specialized scientific work and capabilities. Collectively, they are steps toward AI collaboration in science in multiple, complementary roles rather than a single automated model.

What outsiders are saying: optimism + skepticism + transparency

More and more AI leaders are starting to talk about automated research as something that is close, not far off.

Sam Altman has suggested that systems capable of producing real, new insights could arrive as early as 2026 (The Gentle Singularity, Altman). Dario Amodei makes a similar point, especially about biology, where he believes AI could help drive major breakthroughs by taking on larger parts of the research process, not just small tasks (Machines of Loving Grace, Amodei). At a more practical level, tools like Andrew Ng and Yixing Jiang’s Agentic Reviewer already show how AI can help researchers move faster, with results that line up closely with those of human reviewers. Overall, the shift is clear: AI is no longer just helping with research; it is starting to change how research gets done.

At the same time, the biggest concern is not whether AI can do research, but whether we can trust it while it does. Research from Anthropic’s Alignment team shows that models can quietly underperform or make misleading choices without being easily noticed, which raises real concerns about reliability. Their Claude 4 system card even outlines a point where AI could fully take on the role of an entry-level researcher, and connects that level of autonomy to potential risks, especially if it speeds up AI development itself. Even people who are optimistic about these systems recognize this tension. The same tools that could speed up discovery could also create problems if they are not carefully monitored and guided.

Because of both the excitement and the concerns, institutions are already starting to adjust. A Nature Machine Intelligence editorial highlights the need for clearer explanations when using AI systems in research, especially to avoid wasting time and resources on unreliable results.

Conferences are also responding. For example, ICLR 2026 now requires authors to clearly disclose how they used large language models in both papers and reviews. It warns that failing to do so or submitting AI-generated content that is misleading can lead to rejection. These changes show that AI is already reshaping research norms, even as the rules are still being figured out.

Implications for research, labor, review, and governance

As AI research systems take on more of the research workflow, they will increase the number of new ideas researchers can explore, which in turn will place greater importance on higher-level human judgment. In vibe research, AI agents will handle more hands-on tasks, such as literature search, experiment setup, code execution, and drafting, while humans remain responsible for directing the process and deciding whether the outputs are credible, valuable, or worth pursuing further. This suggests an important shift in which skills are valued in students and junior researchers: less emphasis on carrying out each step manually with close attention to detail, and more on problem framing, verification, and research judgment. AI research systems may make research faster and more accessible, allowing us to gather knowledge much more quickly, but they also make human insight and judgment much more important.

AI’s increasing presence in research will likely allow project leads, labs, and research startups to run many more projects in parallel and increase the overall speed of research output.

Systems like FARS drew attention because they showed how research tasks could be organized in a queue-like workflow that produces outputs continuously. However, this increase in throughput also creates a new challenge. As research output grows, the main bottleneck shifts from generating ideas to building the infrastructure needed to test, filter, and verify which results are actually meaningful.

Google’s AI co-scientist and Biomni both reinforce this point by emphasizing testable hypotheses and lab validation (Google Research, Biomni), showing that scientific value still depends on careful verification rather than idea generation alone. AI may therefore help research organizations scale more efficiently, but it also raises the importance of strong validation systems.

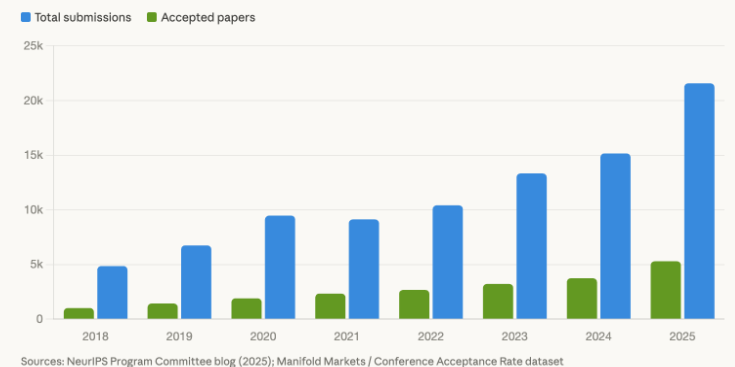

Peer review and academic publishing are already under strain, and automated research systems could make those pressures even harder to manage. Major conferences have seen a sharp rise in submissions, making it more difficult to recruit qualified reviewers and maintain a consistent standard of evaluation. NeurIPS reported that submissions increased from 9,467 in 2020 to 21,575 in 2025. At the same time, ICLR 2026 has already had to respond to LLM-generated papers and low-quality AI-generated reviews, warning that misleading or undisclosed AI use may lead to desk rejection or sanctions. The growth of AI-generated research is not only increasing the volume of material that reviewers and publishers must handle, but also deepening concerns about transparency and trust in the review process.

Figure 2: Bar chart comparing submitted and accepted NeurIPS papers over the last seven years (graph generated with Claude from NeurIPS Program Committee Blog data)

The figure above shows how NeurIPS submissions have grown from 4,856 in 2018 to 21,575 in 2025, a 4.4x increase over seven years. It illustrates the growing strain on the peer review system at a time when AI-generated submissions are on the rise.
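The growth factors can be checked directly from the three submission counts quoted in this section. The sketch below also derives an average annual growth rate, which is our own back-of-the-envelope figure and assumes smooth compounding between 2018 and 2025. It is not a number reported by NeurIPS.

```python
# NeurIPS submission counts as quoted in the text.
submissions = {2018: 4_856, 2020: 9_467, 2025: 21_575}

growth_since_2018 = submissions[2025] / submissions[2018]
growth_since_2020 = submissions[2025] / submissions[2020]

# Derived, not reported: the constant yearly rate that would turn the
# 2018 count into the 2025 count over seven years.
years = 2025 - 2018
annual_rate = growth_since_2018 ** (1 / years) - 1

print(f"2018 to 2025: {growth_since_2018:.1f}x")
print(f"2020 to 2025: {growth_since_2020:.1f}x")
print(f"Implied average annual growth: {annual_rate:.0%}")
```

Submissions grew about 4.4x since 2018 and about 2.3x since 2020, which works out to an average of roughly 24% more submissions each year under the smooth-growth assumption.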

Additionally, access to automated research may not be distributed evenly, which could widen the gap between well-resourced institutions and underfunded ones. Some of the most visible systems in this space already depend on significant computing power, tool access, and operational infrastructure.

FARS relied on a 160-GPU cluster and substantial token spending, while OpenAI has described deep research as very compute-intensive. Anthropic also treats advanced autonomous research and development as a capability threshold that requires stronger safeguards, which further suggests that these systems are not lightweight tools that every group can adopt equally.

This means that the future advantages of automated research may depend on who can afford the computing infrastructure and oversight needed to use these systems effectively. For funders and universities, the challenge may therefore be not only supporting innovation, but also preventing these capabilities from deepening existing inequalities between research groups.

For AI companies and platform builders, the opportunity may be less about selling a single model and more about selling complete research infrastructure. The most important systems in this space are no longer standalone tools, but broader platforms that combine agents, tools, external data, and execution environments into a unified workflow. This creates a strong commercial opportunity, since companies can market themselves as providers of the infrastructure needed to automate major parts of research. At the same time, Nature’s transparency editorial and Anthropic’s autonomy thresholds suggest that research automation must also be treated as a governance problem involving provenance, auditability, and safety evaluation. As a result, companies building these systems may be judged not only by how capable their platforms are, but also by how well they make them transparent, accountable, and safe in research contexts.

For the public and policymakers, the potential upside of automated research may be especially large in fields such as science and medicine. Systems like Google’s AI co-scientist and Biomni suggest that AI could help generate useful biomedical hypotheses, support complex research tasks, and accelerate parts of scientific discovery that would otherwise take much longer. Dario Amodei makes a similar point, arguing that biology and health are among the domains with the greatest potential benefit from powerful AI. At the same time, concerns raised by ICLR and Nature show that institutions are already worried about disclosure, false claims, and wasted human or computational effort. This means that the public benefits of automated research may be substantial, but only if accountability, transparency, and oversight scale alongside capability.

Conclusion

The next stage of this shift will likely not be a sudden moment where AI fully replaces scientists. Instead, it will happen through gradual changes in how research is organized. Systems like FARS, Google AI co-scientist, The AI Scientist, and Biomni suggest that more parts of the research process can now be broken into repeatable workflows and delegated to AI systems. That means the biggest change may be less about whether AI can generate papers and more about how labs, conferences, and universities adapt to research being produced faster and at a much larger scale.

One likely result is that the role of the human researcher will shift. Instead of performing every step manually, researchers may spend more time setting goals, checking outputs, interpreting results, and deciding which directions are worth pursuing. In that sense, AI may increase the value of judgment rather than eliminate the need for human researchers. At the same time, this transition will create pressure on institutions to build stronger norms around disclosure, verification, and accountability. If those systems do not improve quickly enough, the research world may face more low-quality work, more review overload, and more uncertainty about what can actually be trusted.

For that reason, the most important question going forward is not simply how powerful AI research systems become. The more important question is whether the institutions that evaluate and govern science can keep pace with them.

Sources:

- Altman, Sam. “The Gentle Singularity.” Sam Altman, 10 June 2025.

- Amodei, Dario. “Machines of Loving Grace.” Dario Amodei, Oct. 2024.

- Anthropic. “Anthropic’s Transparency Hub.” Anthropic, last updated 10 Feb. 2026.

- Anthropic Alignment Science. “Automated Researchers Can Subtly Sandbag.” Alignment Science, 2025.

- Biomni. “About Biomni: Automating Biomedical Research with AI Agent.”

- Huang, Kexin, et al. “Biomni: A General-Purpose Biomedical AI Agent.” PMC, 2 June 2025.

- “ICLR 2026 Response to LLM-Generated Papers and Reviews.” ICLR Blog, 19 Nov. 2025.

- Jiang, Yixing, and Andrew Ng. “Tech Overview.” Stanford Agentic Reviewer, n.d.

- “Multi-Agent AI Systems Need Transparency.” Nature Machine Intelligence, 27 Jan. 2026.