Blogging Team [8]: Nifasha Diomede, Ariana Elahi, Alyssa Rodrigues, Yusaf Sharif, Maryam Younis

Midcourse Correction

Dave’s Slides: Class 14 Slides (PDF)

Before diving into the discussion, Professor Evans went over the plan for the class moving forward to account for mid-course corrections and included an interesting recap of his own Spring Break. Over break, Prof. Evans went to San Francisco for a hearing in Amazon’s suit against Perplexity (he is an expert witness in the case).

As for the Mid-Course Survey results, 46 of the 60 students in the class filled it out, and 93% of those respondents were happy with their existing table teams.

Prof. Evans clarified the expectations for discussion groups, which are not meant to be a strict assignment graded on a weekly basis, but an authentic, organic source of discussion on the readings and class materials. It is important to note, however, that we are not being graded on any solidly defined criteria for these discussions. Prof. Evans also shared responses on the slides from students about their feelings on their online discussion groups and the in-class seating arrangements. Some people feel the class has been a bit sparse, but the majority would like to continue sitting in their table teams. Professor Evans will begin rearranging tables at the start of class so that groups are not seated at “small” tables.

Prof. Evans encouraged teams leading classes to be more creative and to think of alternative structures that may work better than the typical slides-and-discussion-questions format all teams up to this point have followed. He appreciated the reminder and request to enforce the policy that laptops should only be open for reasons that contribute to the class. This means that if a laptop is open, students should expect to be asked to share what is on their screen with the class. We will occasionally have five minutes at the end of class reserved for project teams to briefly meet, but note that the classroom is also available before class, and teams can use that time and space to coordinate. Based upon other requests and a sense of how the class is going, the promised midterm will not be a formal test, but should be a worthwhile class instead.

Expectations for upcoming News and Lead times have been updated; see the Class 14 Post.

Evans also shared a meta talk on presenting, emphasizing that slides should not contain all the information so that the presentation itself remains important. Overall, the expectation is to prepare earlier, seek feedback, be more creative, and follow improved presentation guidelines.

Professor Evans discussed strategies for giving effective presentations, emphasizing that talks should tell a story rather than simply presenting lists of bullet points. While lists are occasionally appropriate, the goal should be to avoid relying heavily on bullets. He referenced a story from Patrick Henry Winston, in which a student asked for feedback on their slides and Winston responded, “Too many slides, too many words,” without even seeing the presentation, explaining that this is always the case.

Evans also stressed the importance of not reading directly from slides during presentations. If presenters need to read a quote, they should read it from paper rather than from their phone.

He explained that improving presentation skills requires intentional effort: observing other presenters, practicing regularly, and reflecting on both explicit and implicit feedback. The motivation to improve comes from respecting the audience, valuing the content, and developing one’s own skills.

-

The talk by Patrick Henry Winston on How to Speak is here: https://www.youtube.com/watch?v=Unzc731iCUY. Everyone is strongly encouraged to watch it (now, and at least once each year)!

-

Meta Talk: How to Give a Talk So Good You’ll Be Asked to Give Talks About Nothing: [Notes] Slides: [PPTX] [PDF].

Lead Team 4 Topic: Autonomous Weapons & AI-Enabled Warfare

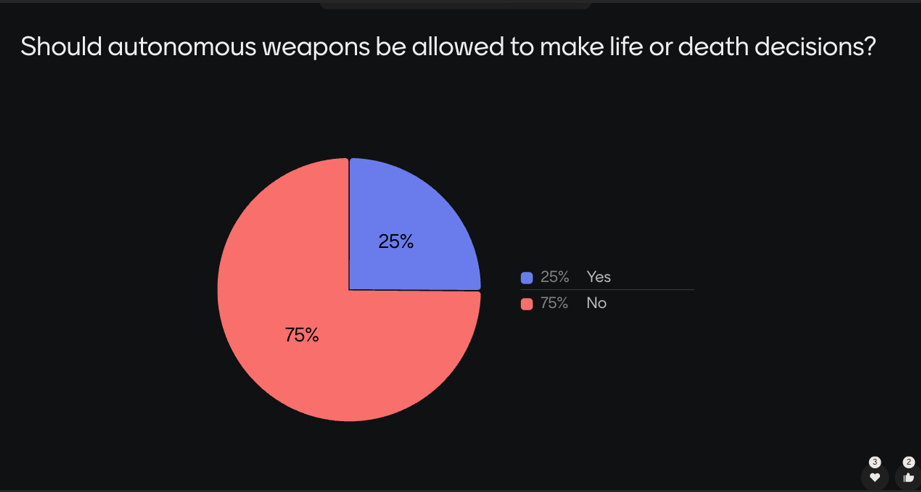

Team 4 presented on autonomous weapons and AI-enabled warfare. They clarified that autonomous weapons are not necessarily the same as AI weapons. Autonomous systems can incorporate AI, but they do not always require it. The class discussed whether autonomous weapons should be allowed to make life-or-death decisions, with 75% of students responding that they should not and 25% responding that they should.

Figure 1: Pulled from class poll

The presentation introduced key legal principles from International Humanitarian Law relevant to autonomous weapons, including the ability to distinguish civilians from combatants, the principle of proportionality in attacks, and the issue of accountability for the use of force. The team also summarized arguments for limiting the autonomy of AI-enabled weapons, including concerns about false positives, automation bias among human operators, and the difficulty of assigning responsibility when accountability becomes diffused across many actors.

Team 4 continued their presentation on autonomous weapons and how the U.S. government has responded to their development. Proposed solutions included compliance with International Humanitarian Law (IHL) and maintaining human judgment and oversight. They also discussed the difficulty of banning autonomous weapons: there is no agreed definition of what counts as an autonomous weapon, which could create confusion and limit enforcement. This definitional problem is still active in international negotiations. The UN Convention on Certain Conventional Weapons Group of Governmental Experts on lethal autonomous weapon systems met March 2-6, 2026, and is scheduled to meet again from August 31 to September 4, 2026. The International Committee of the Red Cross has also called for legally binding rules to preserve human control over the use of force. This shows that the policy question is harder than simply asking whether autonomous weapons should be banned. Governments also have to agree on which systems count, what human control requires, and how rules would be enforced. [UNODA 2026] [ICRC 2025]

The presentation referenced the National Security Commission on Artificial Intelligence (NSCAI) and emphasized that AI may be one of the most consequential inventions of this age. The U.S. Department of Defense is shifting resources toward AI development, particularly as competition with China’s People’s Liberation Army (PLA) grows.

Figure 2: The Commission does not support a global prohibition of AI-enabled and autonomous weapon systems.

(Source: National Security Commission on Artificial Intelligence, 2021)

Team 4 also used Menti polls on the trolley problem to explore ethical dilemmas in autonomous decision-making. Students in the class responded and shared examples while the scenarios were presented, highlighting that real-world decisions are more complex than the binary choices autonomous systems often rely on.

We briefly discussed modern warfare challenges, such as combatants blending in with civilians, and questions of accountability if an autonomous weapon causes civilian harm after being pre-authorized. One TA pointed out that the judicial system is the most conservative branch, meaning it typically takes a long time to establish legal precedent, especially in new areas like AI, where there is not yet a large history of court cases to draw from.

A key issue with autonomous weapon systems (AWS) is assigning accountability, because many people are involved in their development and operation. One discussion question asked whether AWS should be deployed if testing shows they reduce civilian casualties by 40%. Some argued that if the goal is reducing civilian harm, AWS should be considered, since human-operated systems already make serious mistakes, such as missile misidentification causing many civilian casualties. Others emphasized that responsibility and oversight remain major concerns even if performance improves.

Figure 3: Professor Nehal Bhuta

(Source: Edinburgh Law School, 2021)

Another question considered whether AI should ever be allowed to authorize lethal force without human approval. Some argued this should never happen due to risks like hacking and the need for human oversight. Others suggested that if AI can make better decisions than humans, greater autonomy might eventually be acceptable as a “lesser evil”.

We ended the class on the issue of whether the “black box” nature of AI conflicts with International Humanitarian Law. This looped us back to our discussion of Professor Nehal Bhuta’s view: “There is a risk that accountability becomes so diffuse that it’s hard to identify the individuals or groups of agents responsible for violations and failures. This is a common problem with complex modern technologies.” We closed on the importance of accountability and how new frameworks will have to emerge to solve the accountability problem.

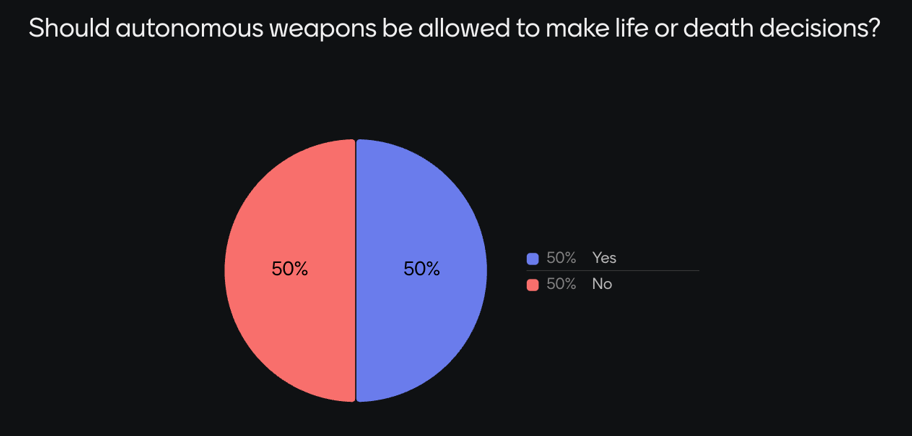

Figure 4: Re-asking the first question.

Lastly, the question asked at the beginning of class was asked again at the end, and the results showed a 25% shift in opinion toward allowing autonomous weapons to make life-or-death decisions.

Sources:

- Fleming, N. (2025, October 29). Why we should limit the autonomy of AI-enabled weapons. Nature. https://www.nature.com/articles/d41586-025-03357-1

- National Security Commission on Artificial Intelligence. (2021). Autonomous weapon systems and risks associated with AI-enabled warfare (Chapter 4). In Final report. https://reports.nscai.gov/final-report/chapter-4

- Team 4, autonomous weapons and AI-enabled warfare, 2026

- United Nations Office for Disarmament Affairs. Convention on Certain Conventional Weapons — Group of Governmental Experts on Lethal Autonomous Weapons Systems (2026). https://meetings.unoda.org/ccw-/convention-on-certain-conventional-weapons-group-of-governmental-experts-on-lethal-autonomous-weapon-systems-2026

- International Committee of the Red Cross. (2025, May 12). Preserving human control over the use of force: A call to regulate lethal autonomous weapon systems under international law. https://www.icrc.org/en/statement/preserving-human-control-over-use-force-call-regulate-lethal-autonomous-weapon-systems

Additional Sources:

- Jennifer Belissent. (2026, January 28). Mind the gaps — and the hype — to navigate AI opportunities. Forbes. https://www.forbes.com/sites/snowflake/2026/01/28/mind-the-gaps---and-the-hype---to-navigate-ai-opportunities/

- Defence Transition. (2025). The Path to an Innovative and Lethal Military. Memos of the President. https://www.scsp.ai/wp-content/uploads/2025/01/Defense-Memo.pdf