Blogging Team 9: Shriya Ramaka, Amita Pavuloori, Eva Butler, Ted Nyberg, Christine Cheung

Lead Topic: Can We Survive Technology?

News: OpenAI’s Agreement with the Department of War

Presented by Team 12: Slides

- Tensions between the Pentagon and AI giant Anthropic reach boiling point. Jared Perlo and Gordon Lubold. NBC News, 20 February 2026. Link: NBC News

- OpenAI reaches AI agreement with Defense Dept. after Anthropic clash. Cade Metz. New York Times, 27 February 2026. Link: NYTimes

On February 27, OpenAI agreed to a deal with the Department of War (DoW); the deal was amended on March 2.

Figure 1: Sam Altman of OpenAI. Source: TED

In July 2025, Anthropic became the first AI company awarded a $200M contract to work with the Pentagon on classified networks. Since then, however, the relationship has soured. In January 2026, Anthropic, led by Dario Amodei, began speaking out in favor of safety regulations. The company wanted to set its own guardrails and prevent Claude from being used for domestic surveillance and autonomous weapons.

In February 2026, tensions between Anthropic and the DoW continued to rise over Claude’s use in US operations in Venezuela. Meanwhile, OpenAI committed to doing more work with the Pentagon.

On February 24, Secretary of War Pete Hegseth demanded that Anthropic give full access to its AI model or else be designated a “supply chain risk.” This designation signals that a vendor introduces strategic or security vulnerabilities into a system, whether through compromise or a lack of trust. It would bar government contractors from using Anthropic’s model.

On February 27, the DoW designated Anthropic a supply chain risk. Anthropic stated that the DoW continues to use its AI for autonomous weapons and target selection. Later that day, Anthropic announced that it had reached a deal with the DoW allowing its models to be used in classified systems. This deal is still pending, and Anthropic’s models are being phased out over the next six months.

That same day, February 27, OpenAI reached a deal with the DoW to use OpenAI’s models in classified settings for “any lawful purpose.” The deal included technical guardrails, and OpenAI employees would work alongside government employees.

On February 28, the deal was amended to prohibit “intentional” government surveillance of US citizens.

On March 9, Anthropic sued the US government over the supply chain risk label. The company claims that the designation violated its First Amendment right to free speech, and that it had tried to reach a compromise with the government, which undercuts the government’s claim that Anthropic is untrustworthy.

Discussion

The news team opened by presenting OpenAI’s deal with the Department of War and contrasting it with Anthropic’s approach. Anthropic sets explicit safety guardrails before any agreement, while OpenAI’s method is more flexible. One participant highlighted this difference directly, pointing to Sam Altman’s idea of “writing limitations into the stack.” The news team pushed back hard on this: unlike a formal policy, code can be quietly changed, so OpenAI could incrementally give the government more and more leeway until uses that are illegal become technically possible.

A classmate argued that Americans have no real right to privacy in public spaces, so the government can already lawfully deploy AI for surveillance, and that he would personally rather have the government doing it than a private company, since the government is more heavily regulated. The news team countered that OpenAI employees can view user chat history and that OpenAI does not hold security clearances. The contract allows the model to run in secure settings, but that is not the same as authorizing uploads of classified data. Another classmate seemed to think the government’s clearance infrastructure made this safe. Professor Evans clarified that the government does take protecting classified data seriously and would not allow classified information to be uploaded to public web interfaces; enabling classified use of OpenAI’s models would mean running the models within a protected environment where all of the staff have clearances.

On the industry side, the news team noted that Microsoft and employees of Google and OpenAI have all filed amicus briefs backing Anthropic, calling the supply chain risk designation an overreach. The government argues that Anthropic could pull access at any moment, making it an unreliable partner, but we all found this reasoning questionable.

A common thread running through the discussion was unease. If the government is gravitating toward the AI company with looser restrictions, and if “lawful purposes” can be quietly redefined over time, the class felt that was a genuinely worrisome trajectory, especially if surveillance is already on the table.

Main Topic: The Evolution of Nuclear Technology and Its Regulation

Presented by Evans and Team 5: Slides PDF

Before the lead team’s presentation, Professor Evans spoke about the history of nuclear weapons development and regulation.

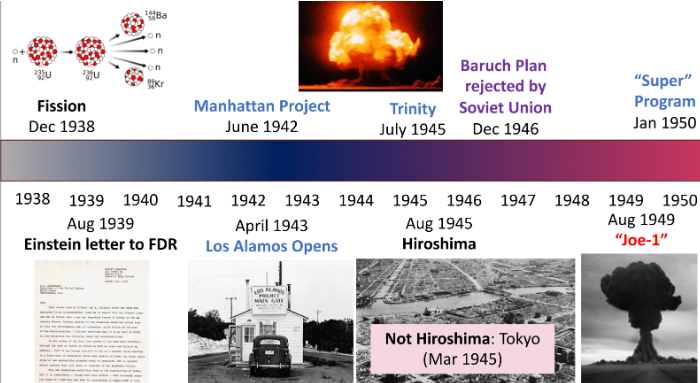

Figure 2: Nuclear testing timeline. Source: Evans

Nuclear technology advanced extremely quickly in the mid-20th century, moving from the discovery of fission in 1938 to fully developed atomic weapons within just a few years. The Manhattan Project led to the first successful test (Trinity), followed by the atomic bombings of Hiroshima and Nagasaki in 1945. By 1949, the Soviet Union had developed its own bomb, beginning a nuclear arms race that drove rapid scientific and military innovation.

Figure 3: Richard Garwin receiving the Medal of Freedom. Source: Washington Post

Figures like Richard Garwin were not just involved in building nuclear technology but also in trying to control it. Garwin helped design the hydrogen bomb, a role that was largely hidden from the public and even his own family. At the same time, he advised presidents and spent much of his career working to reduce the risks of the technology he helped create. We also learned that Professor Evans worked with Garwin (who died last year), which made this feel especially real.

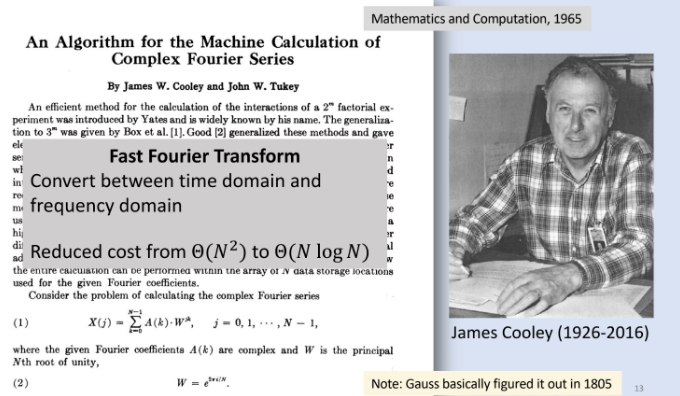

Figure 4: Cooley Fast Fourier Transform. Source: Evans

In class, we also learned that underground testing was not banned by the original nuclear testing agreements because there was no reliable way to detect underground nuclear tests. A verifiable ban on underground testing required a way to detect underground nuclear explosions. Seismic waves from underground explosions could be measured and analyzed, but identifying a nuclear explosion in seismic data would have required more computation than was feasible at the time. The Fast Fourier Transform (FFT) made this possible: it converts signals from the time domain to the frequency domain using on the order of N log N operations instead of N², allowing researchers to distinguish nuclear tests from natural earthquakes. This was especially important because the United States needed a reliable way to determine whether other countries were secretly testing nuclear weapons.
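To make the time-to-frequency idea a little more concrete, here is a minimal Python sketch of a radix-2 Cooley-Tukey FFT using only the standard library. It assumes the input length is a power of two and runs on made-up toy signals, not real seismograms. The `high_freq_fraction` feature and its cutoff are hypothetical illustrations we chose for the example; actual seismic discrimination between explosions and earthquakes relies on far more than a single spectral ratio, and this is not the historical code.

```python
import cmath
import math


def fft(x):
    """Recursive radix-2 Cooley-Tukey FFT; len(x) must be a power of two."""
    n = len(x)
    if n == 1:
        return [complex(x[0])]
    if n % 2 != 0:
        raise ValueError("input length must be a power of two")
    even = fft(x[0::2])  # FFT of the even-indexed samples
    odd = fft(x[1::2])   # FFT of the odd-indexed samples
    out = [0j] * n
    for k in range(n // 2):
        # Twiddle factor rotates the odd half before combining (the "butterfly").
        t = cmath.exp(-2j * math.pi * k / n) * odd[k]
        out[k] = even[k] + t
        out[k + n // 2] = even[k] - t
    return out


def naive_dft(x):
    """Direct O(N^2) DFT, used only to check the FFT's output."""
    n = len(x)
    return [
        sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def dominant_bins(x, count):
    """Indices of the `count` strongest frequency bins below Nyquist."""
    spectrum = fft(x)
    half = len(x) // 2
    mags = [abs(c) for c in spectrum[:half]]
    strongest = sorted(range(half), key=lambda k: mags[k], reverse=True)
    return sorted(strongest[:count])


def high_freq_fraction(x, cutoff_bin):
    """Hypothetical toy feature: share of spectral energy at or above cutoff_bin."""
    spectrum = fft(x)
    half = len(x) // 2
    energy = [abs(c) ** 2 for c in spectrum[:half]]
    total = sum(energy)
    return sum(energy[cutoff_bin:]) / total if total else 0.0


if __name__ == "__main__":
    n = 256  # samples in the toy window
    # Toy signal: 5 cycles plus a weaker 40-cycle component per window.
    two_tone = [math.sin(2 * math.pi * 5 * t / n)
                + 0.5 * math.sin(2 * math.pi * 40 * t / n) for t in range(n)]
    print(dominant_bins(two_tone, 2))  # [5, 40]

    slow = [math.sin(2 * math.pi * 3 * t / n) for t in range(n)]
    sharp = [math.sin(2 * math.pi * 60 * t / n) for t in range(n)]
    print(round(high_freq_fraction(slow, 20), 3))   # 0.0
    print(round(high_freq_fraction(sharp, 20), 3))  # 1.0
```

The two-tone signal comes back as bins `[5, 40]`, the two frequencies (in cycles per window) it was built from, even though neither is obvious from looking at the raw samples. The recursion splits the signal into even and odd samples at every level, which is where the N log N saving comes from; `naive_dft` is the direct N² computation, kept only as a check.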

These developments eventually led to the Comprehensive Nuclear-Test-Ban Treaty (1996), which aimed to ban all nuclear testing. We also discussed how the New START Treaty, the last major agreement limiting nuclear weapons between the United States and Russia, expired on February 5, 2026. This shows how fragile these systems are and why monitoring technologies are still so important.

All of this builds into the bigger question from the reading: can we survive technology?

- John von Neumann, “Can We Survive Technology?” Fortune Magazine, June 1955. [PDF]

Techno-Optimism vs. Doomerism

Recap of the Two Articles:

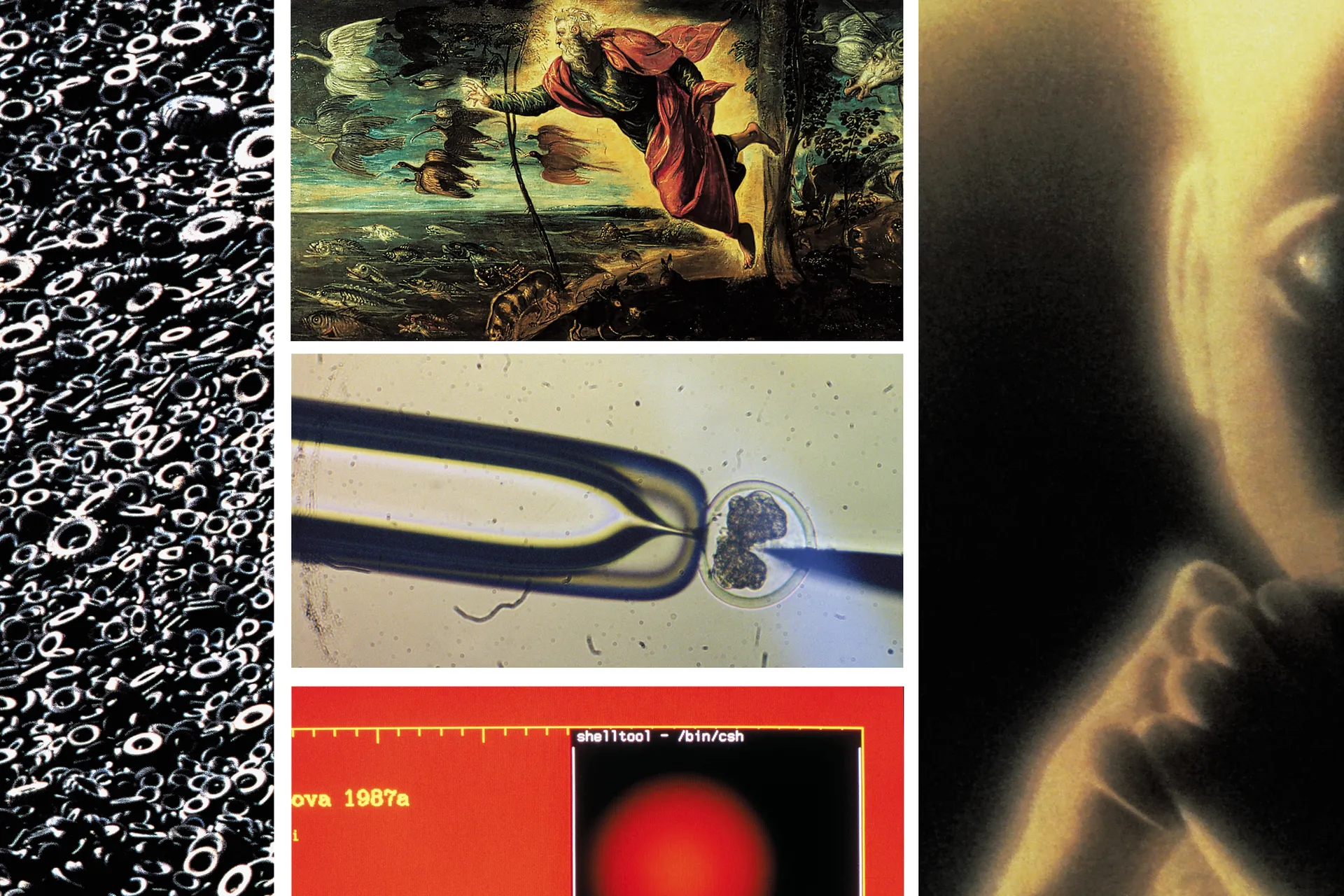

Marc Andreessen’s “Techno-Optimist Manifesto” makes a strong case for going all-in on technology and not being afraid of it. He pushes back against many common fears about AI, automation, and innovation, arguing that technology has historically been the main driver of progress, rising living standards, and economic growth. In his view, instead of slowing things down with regulation or worrying too much about risks, we should accelerate technological development, because that is what solves big problems and creates abundance. He also emphasizes the huge role of free markets, since competition and innovation naturally lead to better solutions and more opportunities. AI in particular is framed as a powerful tool that can expand human potential and help tackle global challenges. Overall, the manifesto is confident, even bold, in claiming that technology is not something to fear but something that will keep improving human life if we let it move forward without too many restrictions.

Figure 5: Why the Future Doesn’t Need Us. Source: Wired

Taking a more pessimistic view, Bill Joy’s article “Why the Future Doesn’t Need Us” offers a striking warning about the potential dangers of rapidly advancing technologies, especially genetics, nanotechnology, and robotics. Joy argues that, unlike past innovations, these technologies could become self-replicating, widely accessible, and difficult to control, which might pose serious risks to humanity. He raises concerns that artificial intelligence could surpass human intelligence, that genetic engineering could enable harmful biological manipulation, and that nanotechnology could lead to disastrous scenarios such as uncontrollable self-replicating machines. What makes these threats even more alarming is that they may not be limited to governments or large institutions; individuals could develop them too, making regulation much harder. Because of this, Joy suggests that society may need to rethink its approach to technological progress and consider restricting certain technologies to avoid potentially irreversible consequences. Overall, the article encourages readers to reflect on the ethical responsibilities tied to innovation and to question whether all technological progress is necessarily beneficial.

Figure 6: Sam Altman Statement. Source: OpenAI

Our table’s discussion began by critiquing and building an argument around the tech optimists’ point of view. Professor Evans noted that Thomas Jefferson’s 1804 letter to Michael Krafft expressed very similar ideas: that advances in science could provide useful solutions to humanity’s most common problems, from agriculture to daily household tasks. We then discussed the broad historical pattern in which technological discoveries generally improve aspects of human life (electricity, the wheel, etc.). In contrast, we struggled to name technologies that bring only a net negative to society as a whole.

One classmate weighed the problems technology solves against the new problems it creates. Could we be entering a period in which we are mostly solving problems that AI’s progress has created?

Per the lead team’s instructions, each table split in half so that the two halves could join tables arguing opposite sides. Following the rules closely, each side argued strongly for either a tech-optimist or a tech-pessimist viewpoint. Midway through the discussion, everyone switched sides.

Figure 7: Invention of the Cotton Gin Accelerates the Expansion of Slavery. Source: Isoureconomyfair

A key argument from the tech pessimists was that technology has widened, and can continue to widen, the wealth gap, essentially hollowing out the middle class. Optimists, however, pointed to the example of slavery, arguing that the invention of the cotton gin did not exacerbate slavery but merely changed the economics behind the institution. Professor Evans pointed out that reform generally began in the upper class, as people came to recognize slavery’s immorality, which was unprecedented in a society where slavery had been accepted as a social norm for centuries. Optimists also noted that while the wealth gap within the US is growing, income inequality between countries has decreased significantly, largely thanks to technology. More detail on this can be found here.

Another distinctive argument concerned the “smartness” of humanity in the face of rapid technological advancement. While pessimists cited a study finding that people are less intelligent than their parents, optimists argued that such studies measure intelligence poorly. Younger generations may better understand newer technologies, which could reflect a kind of intelligence these measures miss. Our discussion revisited a passage from John von Neumann’s “Can We Survive Technology?”:

“The most hopeful answer is that the human species has been subjected to similar tests before and seems to have a congenital ability to come through, after varying amounts of trouble. To ask in advance for a complete recipe would be unreasonable. We can specify only the human qualities required: patience, flexibility, intelligence.”


Figure 8: von Neumann. Source: Dept. of Energy