Blogging Team [7]: Seth Lifland, Aryan Thodupunuri, David Hu, Pallavi Mamillapalli, Grace Kitt

Additional News: From FARS to Vibe Research

News Discussion: AI Safety, Competition, and the Anthropic Debate

Professor Evans began by presenting recent news about Anthropic. Anthropic has generated lots of headlines recently, with a mix of negative and positive articles.

We began by discussing some of the more negative articles, which covered recent changes to Anthropic’s safety policy, including the announcement that the company will remove its flagship safety commitment to pause development if its models reach a certain level of risk. Anthropic said it made this change because it believes other companies will not responsibly pause, so pausing on its own would only end up hurting the world.

A student then brought up Anthropic’s recent announcement that it had detected Chinese open-source model companies using fraudulent accounts to use Claude to train their own models, a process known as distillation. The class discussed how these claims are valid, in that the Chinese developers violated Anthropic’s terms of use, but also ironic, because Claude is often alleged to have been trained on large amounts of copyrighted work (and Anthropic is involved in lawsuits relating to this).

Professor Evans then moved on to discuss the recent news about Anthropic and its interactions with the Pentagon. After Anthropic refused to remove guardrails preventing its models from being used in autonomous weapon systems and in surveillance, the Department of Defense gave the company a deadline of 5:01 PM on Friday, February 27, to acquiesce to Defense Secretary Pete Hegseth’s demands, or else Anthropic will be declared a supply chain risk. A brief history of Anthropic’s work with the DoD was given, including reports that Claude was used in the military operation to capture Nicolás Maduro.

Being declared a supply chain risk means that Anthropic would be blacklisted from working with the government, and any company with government contracts would be banned from working with Anthropic, which would affect a large number of companies.

The class discussed how this seems like a very black-and-white situation: Anthropic can either agree to its models being used for exactly what the DoD wants, or take a massive hit to business viability. There are many potential middle grounds, such as Anthropic withdrawing from the contract without being declared a supply chain risk, but as of class time the DoD seemed committed to its strong ultimatum.

Updates: After class, the dispute became more concrete. On February 27, Anthropic said the negotiations with the Department of War (DoW) broke down over two exceptions that the company wanted to keep: no mass domestic surveillance of Americans and no fully autonomous weapons. On March 6, the Pentagon said it had officially informed Anthropic that the company and its products were deemed a supply chain risk, effective immediately. OpenAI announced a Department of War agreement on February 28, then updated it on March 2 to add explicit language against domestic surveillance of U.S. persons. These updates reinforced the point raised in class that the issue is not just whether AI companies should have safety policies, but whether those policies can survive pressure from government procurement and national-security demands. [Anthropic statement] [Defense News/AP] [OpenAI statement]

Lead Topic: The Adolescence of Technology

Presented by Group 7

Link to Slides

Article: Dario Amodei. The Adolescence of Technology. January 2026. (Continued from Class 12.)

Group 7’s presentation centered on Dario Amodei’s argument that artificial intelligence is currently in a stage of technological “adolescence”. Like an adolescent human, AI possesses growing power and capability without fully developed systems of responsibility, governance, or societal understanding. The presentation examined how rapid AI advancement introduces not only technical opportunities but also political, social, economic, and philosophical risks.

Rather than treating AI purely as software, the discussion framed AI as a civilizational technology — one capable of reshaping institutions, labor markets, and even human identity.

The Odious Apparatus: Power and Governance

The first portion of the presentation explored how AI systems can become dangerous depending on who controls them. AI itself is not inherently harmful, but when combined with centralized authority it could enable large-scale surveillance, autonomous weapons, and highly personalized propaganda campaigns.

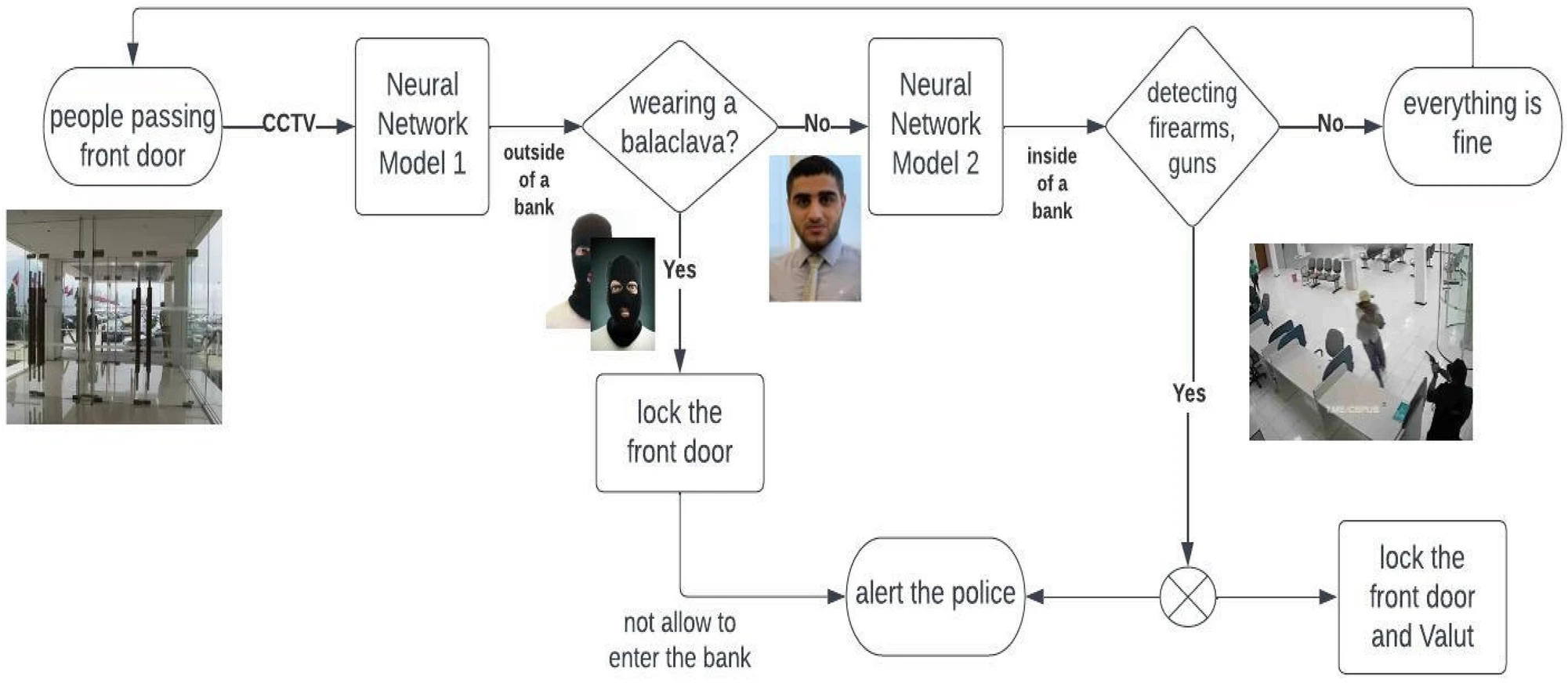

Figure 1: Conceptual overview of AI surveillance systems and centralized data monitoring.

Source: Improving video surveillance systems in banks using deep learning techniques

Students discussed how governments could leverage AI to tighten social control, especially in already authoritarian systems. Predictive policing, large-scale monitoring, and AI-generated media were all brought up as examples of tools that could shape behavior or public opinion at scale. At the same time, several people pointed out that democracies aren’t automatically safe from these risks either. Technologies that start out framed as security measures can slowly expand beyond what they were originally meant for.

The class debated whether technological capability should automatically justify deployment. Some argued that surveillance technologies could genuinely prevent violence or terrorism and therefore save lives. Others raised concerns about historical examples of government overreach, pointing to surveillance programs that expanded far beyond their initial scope.

A recurring theme was trust. Even if a government begins using AI surveillance for legitimate safety reasons, students questioned whether citizens could rely on long-term restraint once such infrastructure exists. The consensus that emerged was not a simple yes-or-no answer but rather the importance of guardrails: transparency, democratic oversight, and clear limits on acceptable use.

Discussion: Is it ever acceptable for a government to use AI surveillance if it claims it will make people safer?

Responses were divided. Some students argued that refusing surveillance entirely may ignore real-world threats. They pointed out that governments already monitor financial transactions, communications, and transportation systems to prevent crime. From this perspective, AI surveillance might just be a more advanced version of systems that already exist.

Others pushed back, emphasizing that AI dramatically scales surveillance capabilities. Unlike traditional monitoring, AI systems can analyze massive datasets continuously and invisibly. Several students worried that once this kind of system becomes normalized, privacy could slowly erode without any clear moment where society explicitly agreed to give it up.

Several participants suggested a middle-ground approach, arguing that surveillance may be acceptable only under strict accountability mechanisms, such as judicial oversight, limited data retention, and clear public transparency. The discussion ultimately highlighted how difficult it is to balance safety with personal freedom, especially when the technology itself keeps getting more powerful.

Black Seas of Infinity: Biological and Social Transformation

The presentation then shifted toward the unintended consequences of rapid scientific acceleration enabled by AI. Advances in biology, medicine, and neuroscience may allow humans to extend lifespan, enhance intelligence, or fundamentally alter human capabilities.

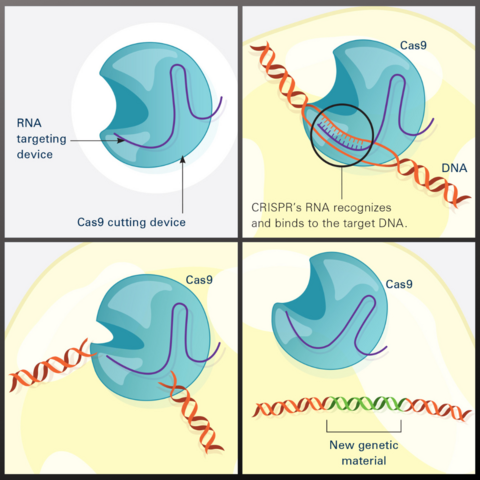

Figure 2: CRISPR-Cas9, a gene-editing technology that provides opportunities for AI to accelerate biological advances.

Source: National Institute of General Medical Sciences

Students explored where society should draw the line between beneficial and harmful biological enhancement. Medical treatments such as lab-grown organs or genetic disease prevention were widely viewed as positive developments. The harder questions start when technology moves beyond treatment and into enhancement. At what point does improving human ability stop being medicine and start becoming redesign?

One example that sparked debate was the idea of removing fear or pain. While it sounds appealing at first, several students pointed out that fear exists for a reason: it helps humans recognize danger and make cautious decisions. Eliminating such traits might produce unintended psychological or social consequences.

The concept of mind uploading generated particularly strong reactions. Many students expressed skepticism that a digital copy of a consciousness would truly constitute survival, instead describing it as a clone that merely believes it is the original person. Others wondered whether gradually replacing parts of the brain over time might make the distinction less clear, raising questions about identity and continuity of consciousness.

Underlying the discussion was a broader concern: technological capability may advance faster than humanity’s ability to define ethical boundaries.

Discussion: Where should we draw the line between beneficial biological enhancement and harmful biological enhancement? Who gets to decide?

Students struggled to identify a clear boundary. Some argued that enhancement should be limited to therapeutic applications such as disease cures or the restoration of normal human function. But that distinction quickly became messy. Someone pointed out that many accepted technologies, such as corrective lenses or cognitive stimulants, already enhance human performance beyond natural limits.

The issue of inequality arose repeatedly. If biological enhancement becomes expensive or restricted, access may be limited to wealthy individuals, potentially widening existing social divides. Some students worried this could create a future where biological advantages compound existing social and economic gaps. Rather than improving humanity as a whole, enhancement could reinforce hierarchy.

There was also disagreement about who should have the authority to make these decisions. Many students felt uncomfortable leaving that power entirely in the hands of corporations or research labs, especially when profit incentives are involved. Others noted that purely democratic decision-making might struggle to keep pace with scientific progress. By the end of the conversation, it became clear that there may not be a universal line at all. Different societies may draw boundaries differently depending on cultural values and ideas about what it means to live a good or meaningful human life.

Player Piano: Economic Disruption and Labor

This section examined the economic consequences of advanced AI systems. The section title references Kurt Vonnegut’s 1952 novel Player Piano, which depicts a society where automation has displaced most human workers. Unlike previous technological revolutions that primarily replaced physical labor, AI may automate intellectual and creative work as well.

Students discussed predictions that large portions of entry-level white-collar employment could be displaced within a relatively short timeframe. This raised concerns not only about unemployment but also about who benefits from increased productivity. If a small number of companies control powerful AI systems, the economic gains could concentrate heavily among those who own the technology rather than being distributed across society.

The class debated whether AI represents a continuation of historical automation or a fundamentally different transition. Some students argued that society has repeatedly adapted to technological disruption, creating new industries and opportunities. Others suggested AI may differ because it targets cognitive skills traditionally considered uniquely human.

Figure 3: Automation in manufacturing, a historical precedent for labor displacement that AI may extend to cognitive work.

Discussion: Should AI companies be responsible for supporting displaced workers?

The class was divided on whether AI companies themselves should be held responsible for workers displaced by automation. Some students argued that it would be unfair to hold AI firms to a different standard than past industries. Factories and manufacturing companies were not required to subsidize workers who lost their jobs during earlier waves of automation, so imposing that obligation on AI companies would be an inconsistent policy response. Others raised a practical concern: the AI sector, while influential, may not yet be large or profitable enough relative to the entire economy to financially support all displaced workers. Additionally, it can be difficult to establish direct causation, that is, whether layoffs are truly due to AI replacement or whether companies are blaming workforce reductions on AI as a convenient explanation.

At the same time, several students suggested that the government should take primary responsibility for supporting displaced workers through potential policies like universal basic income (UBI). However, UBI was not viewed as a perfect solution; some noted that it might simply lift people slightly above the poverty line without addressing deeper questions of meaning and social contribution. A more speculative idea raised was whether AI itself could help design better economic transition policies. Ultimately, the discussion revealed tension between fairness, practicality, and the scale of potential disruption that AI could cause.

Discussion: Is this technological moment fundamentally different from past technological revolutions?

Many students felt that this moment may be fundamentally different from previous waves of technological change. Historically, major innovations, from the Industrial Revolution to mechanized agriculture, primarily replaced physical labor. While disruptive, those shifts often created new forms of employment that relied on human cognitive skills. AI, however, threatens to automate not only manual tasks but also intellectual and creative labor, areas long considered uniquely human. Several students noted that it is rare for a technology to meaningfully compete with mental reasoning, writing, design, coding, and decision-making all at once.

Some students suggested that if AI continues advancing rapidly, it could theoretically replace nearly all forms of human labor rather than just specific sectors. This raises deeper philosophical concerns about human purpose and identity. Others cautioned against assuming inevitability, pointing out that previous generations also feared total displacement, yet society adapted in unexpected ways. Still, the breadth and speed of AI’s capabilities make this transition feel different. Whether it ultimately proves to be another chapter in a long history of adaptation or a true break from the past remains uncertain.

Closing Poll

The class ended with a poll:

Which worries you most and why?

- Loss of jobs

- Authoritarian control

- Extreme wealth concentration

- Loss of human purpose

Surprisingly, no one voted for “Loss of jobs”. The votes were roughly equally split among the other three choices.

Additional References

News (Anthropic):

- Anthropic removes safety pause commitment — CNN

- Detecting and preventing distillation attacks — Anthropic

- Chinese firms accused of Claude distillation — CNBC

- Pentagon-Anthropic standoff over AI guardrails — NPR

- Claude and the Maduro capture operation — Firstpost

- Statement on the comments from Secretary of War Pete Hegseth — Anthropic

- Pentagon says it is labeling Anthropic a supply chain risk “effective immediately” — Defense News/AP

- Our agreement with the Department of War — OpenAI

Lead topic:

- Dario Amodei, The Adolescence of Technology — Full essay (January 2026)

- Kurt Vonnegut, Player Piano — 1952 novel on automation and labor

- Universal Basic Income — Policy proposal for supporting displaced workers

- Class 12 Blog: Adolescence of Technology, Part 1 — Related coverage from the previous class