Blogging Team 2: Amelia Chen, Laxmi Ghanate, Ryan Russo, Shaurya Singh, Matthew Vu

News: RIP Sora

Presented by Team 3 [Slides]

Sora is an AI-generated video content platform, and the news team discussed how it was recently shut down by OpenAI.

Sora produces videos from prompts. It was first released on social media and then as a standalone app trying to build a social media platform. There are some inconsistencies in the videos Sora can generate, including incorrect generation of body parts or frames being cut off too fast.

The CEO of the AI detection platform Copyleaks described Sora as a “content moderation nightmare”. It spread rampant misinformation and could be used to make violent or racist content.

OpenAI stated that they will still be using the video generation model for training content. More broadly, the shutdown is an example of a limit placed on AI development, which may emerge as a trend.

Discussion: Should AI tools like Sora be released to the public if content moderation is not fully solved? Why or why not?

Much of the class was opposed to technologies like Sora (though the doorbell videos are really funny and do bring joy to the world!). Students mentioned other technologies, such as Grok AI, which have pushed people away from video technologies.

Some argued the Sora platform should be more heavily moderated, but if Sora videos are being uploaded on external platforms, then it is not Sora’s responsibility to regulate it.

Lead: Model Collapse

Presented by Team 10 [Slides]

- Ilia Shumailov, Zakhar Shumaylov, Yiren Zhao, Nicolas Papernot, Ross Anderson and Yarin Gal. AI models collapse when trained on recursively generated data. Nature, July 2024.

- Rylan Schaeffer, Joshua Kazdan, Alvan Caleb Arulandu, and Sanmi Koyejo. Position: Model collapse does not mean what you think, 2025.

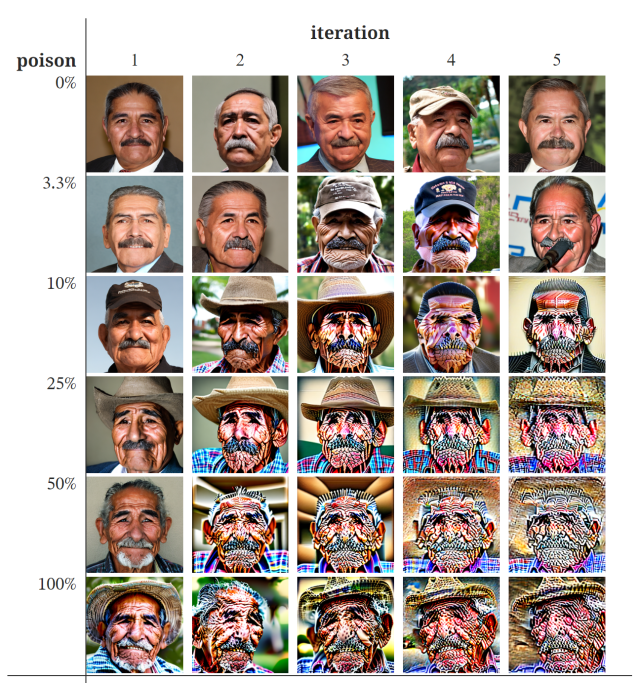

The lead team used the telephone game as an analogy for model collapse. When a model collapses, the same information is repeated, and over time it degrades and loses its original meaning. The concern is that AI-generated data will end up in future models’ training data. The overarching issue the lead team raised was that a model trained this way will start overestimating the events that happen most often, and this will lead to model collapse.

The lead team presented the optional reading, [Model Collapse Does Not Mean What You Think](https://arxiv.org/pdf/2503.03150). The paper challenges the common idea that AI systems will eventually enter a “death spiral” where models become completely useless after being trained on synthetic data.

The authors argue that model collapse is often misunderstood. Rather than being a total failure, collapse may appear as a gradual loss of variance in model outputs where models become “empty.” This is due to synthetic data being generally less diverse than human-generated data.

Another thing discussed was that researchers do not agree on a single definition of model collapse. The paper identifies eight different definitions, which makes it difficult to have productive scientific discussions because different studies may be referring to different types of degradation.

The lead team explained that many catastrophic predictions assume unrealistic conditions where training data is completely replaced with synthetic data generated by earlier models. Most AI systems mix synthetic data with large amounts of real human data. However, a real concern exists in the form of diversity collapse, where rare information disappears first. This could disproportionately affect marginalized perspectives and lead to models that repeat common patterns rather than reflecting the diversity of human-generated data.

The team also discussed data replacement. Earlier predictions assumed that models would slowly diverge until collapse, whereas the more realistic expectation is that only small portions of a model's training data would become synthetic. Replacement assumes old data is deleted and replaced by synthetic data, whereas accumulation keeps the old data and adds new synthetic data alongside it. Diversity collapse was described as the erasure of tails: imagine medical models that never diagnose rarer conditions. The solutions mentioned for the issue of data replacement were curation and filtering, watermarking, and human archiving.
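The difference between replacement and accumulation can be illustrated with a toy simulation. The sketch below is not the setup from either paper: the "model" is simply a Gaussian fitted to a pool of numbers, and the function name `simulate`, the sample size of 10, and the 200 generations are all hypothetical choices made for illustration. Under replacement, each generation is fitted only to the previous generation's synthetic samples; under accumulation, synthetic samples are added to a pool that still contains the original data.

```python
import random
import statistics


def simulate(mode, generations=200, n=10, seed=0):
    """Toy recursive training loop (illustrative assumption, not the papers' setup).

    mode="replace":    each generation trains only on the previous
                       generation's synthetic samples.
    mode="accumulate": synthetic samples are added to a growing pool
                       that still contains the original real data.
    Returns the standard deviation of the final training pool.
    """
    rng = random.Random(seed)
    # "Real" data: n draws from a standard normal distribution.
    pool = [rng.gauss(0.0, 1.0) for _ in range(n)]
    for _ in range(generations):
        # "Train" the model: fit a Gaussian to the current pool.
        mu = statistics.fmean(pool)
        sigma = statistics.pstdev(pool)
        # "Generate" synthetic data from the fitted model.
        synthetic = [rng.gauss(mu, sigma) for _ in range(n)]
        if mode == "replace":
            pool = synthetic          # old data is discarded
        else:
            pool = pool + synthetic   # old data is kept
    return statistics.pstdev(pool)


replace_std = simulate("replace")
accumulate_std = simulate("accumulate")
print(f"replace:    final std = {replace_std:.3g}")
print(f"accumulate: final std = {accumulate_std:.3g}")
```

With so few samples per generation, the replacement run's spread shrinks toward zero, which is the loss of variance described above, while the accumulation run keeps a spread far closer to that of the original data.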

Discussion: What are some different metrics you can think of to identify “model collapse”?

The class discussed several possible ways to identify model collapse before it becomes severe.

One way was to compare the distribution of human-generated data to AI-generated outputs. If models begin repeating the same phrases, patterns, or styles more frequently, it may indicate that diversity is shrinking. Another metric mentioned was the disappearance of tail data, where rare examples or uncommon phrases gradually vanish from model outputs. Students also referenced the concept of hallucinated data, where models begin generating outputs that were not present in the original dataset. Changes in population risk were also discussed, meaning the model’s performance on real human data becomes worse over time as it is increasingly trained on synthetic data.
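Two of these ideas, repeated phrasing and disappearing tail data, can be turned into simple measurements. The sketch below is one possible illustration under stated assumptions and was not presented in class: the function names `distinct_n` and `tail_coverage`, the whitespace tokenization, and the toy sentences are all hypothetical. `distinct_n` reports the fraction of n-grams that are unique (lower means more repetition), and `tail_coverage` reports what fraction of the rare words in a human reference set still appear anywhere in the model's outputs.

```python
from collections import Counter


def distinct_n(texts, n=2):
    """Fraction of n-grams that are unique; lower values mean more repetition."""
    ngrams = []
    for text in texts:
        tokens = text.lower().split()
        ngrams.extend(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))
    return len(set(ngrams)) / len(ngrams) if ngrams else 0.0


def tail_coverage(reference_texts, generated_texts, max_count=1):
    """Fraction of rare reference words (count <= max_count) seen in generated text."""
    ref_counts = Counter(tok for t in reference_texts for tok in t.lower().split())
    rare = {tok for tok, count in ref_counts.items() if count <= max_count}
    if not rare:
        return 1.0  # no tail to lose
    generated = {tok for t in generated_texts for tok in t.lower().split()}
    return len(rare & generated) / len(rare)


# Toy data (hypothetical): the "model" output repeats common patterns
# and drops the rare sentence entirely.
human = [
    "the cat sat on the mat",
    "a rare axolotl swam by",
    "the dog sat on the rug",
]
model = [
    "the cat sat on the mat",
    "the cat sat on the mat",
    "the dog sat on the mat",
]

print("distinct-2 (human):", round(distinct_n(human), 3))
print("distinct-2 (model):", round(distinct_n(model), 3))
print("tail coverage:     ", round(tail_coverage(human, model), 3))
```

On real corpora these measurements would need a proper tokenizer and comparable sample sizes, since both values depend on how much text is measured.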

Which of the definitions of model collapse do you think is the most important?

Different students emphasized different definitions of model collapse. One student argued that changes in scaling laws are the most important because they would require more computing, data, and cost for models to achieve the same performance.

Another student suggested that the most important definitions are the ones related to diversity collapse, such as shrinking tails, hallucinated data, and entanglement of modes. These definitions capture the idea that AI systems may become increasingly repetitive or produce “AI slop”, where models reinforce the same patterns rather than reflecting the diversity of real human-generated information. Cognitive surrender, meaning handing one's thinking over to AI, was also mentioned. This is especially damaging when AI is not producing accurate, representative information.

What does model collapse mean for the legitimacy of any AI-produced data interpretation?

The discussion also explored how model collapse could affect the legitimacy of AI-generated interpretations of information. If models increasingly rely on synthetic data, they may begin reinforcing patterns created by earlier AI systems rather than reflecting real-world knowledge.

Some students compared this to how students are taught to verify sources and not rely solely on platforms like Wikipedia. Similarly, AI outputs should likely be treated as one source of information rather than a definitive authority. This raises concerns that if AI-generated content becomes widespread, models may slowly drift away from accurately representing real human perspectives.

Is there a realistic way to force LLMs to account for the extraordinary samples? How do we prevent history from being erased?

Students discussed whether it is possible to force AI models to preserve rare or unusual data points. One concern was that models trained primarily on probability-based patterns will naturally favor common examples while ignoring rare ones. This could lead to the erasure of niche knowledge, marginalized perspectives, or unusual historical events. Some potential solutions mentioned included curating and filtering training data, watermarking AI-generated content so it can be excluded from training datasets, and preserving archives of human-generated data created before the rise of generative AI. These strategies may help ensure that models continue to learn from diverse human sources rather than only synthetic data.

Professor Evans’ comments: Professor Evans is more worried about cultural collapse than model collapse. Generative AI is one factor exacerbating cultural collapse, but it is also a result of centralization of media and economic forces that impact artists and creators.

Evans pointed out the tension between capturing parts of the distribution that are represented by only a few training examples and avoiding memorization, the topic for the next class.

One of the slides raised the question of what it means for a source to be “legitimate”. He compared the legitimacy of AI-generated knowledge to sources like Wikipedia, which intend to capture large amounts of “objective” human knowledge even when there are disagreements or controversies. In that sense, idealized models can objectively capture and summarize views of humanity, at least inasmuch as they are trained on a corpus that reflects this.